Law as Hypothesis Testing

Adaptive Trials for Open Societies

Problem: Good Enough for Government Work

It is perhaps overly simplistic to chart the inversion of meaning in the phrase “good enough for government work.” Nonetheless, one of the intuitions underlying my thinking is that the loss of faith in democracy stems partly from the fact that, while government programs have undoubtedly effected meaningful change, their impact often falls short of their aspirations. The very visible gap between intent and execution leads many to believe that government does not consistently deliver well-designed, well-implemented programs or demonstrate clear strategic thinking. The roots of this are many and varied:

Zero-Sum Contest: The majority strives to force through its agenda at all costs while in power, and the minority makes every effort to block it.

Horse Trading and Partisanship: Compromises and trade-offs dilute the original intent of laws, leading to legislation that serves political expediency rather than the public interest.

Noise over Nuance: Buzzworthy, blunt-instrument measures are favored over the painstaking work of defining, testing, and revising hypotheses.

Confirmation Bias: Policymakers may overlook critical data in favor of information that confirms their preconceptions.

Lobbying and Influence: Special interest groups can unduly influence legislation, pushing for laws that benefit a few at the expense of the general welfare.

Opacity and Accountability: The processes by which laws are negotiated and enacted often lack transparency, hindering public accountability.

Short Election Cycles: Politicians focus on short-term wins rather than long-term solutions due to upcoming elections.

Lack of Interdisciplinary Approaches: Lawmaking often lacks input from diverse fields, leading to solutions that don't fully address multifaceted problems.

Complexity of Modern Societies: The interdependence of global systems can overwhelm the legislative process, leading to blunt solutions.

Inadequate Data and Research: Policies might be based on incomplete or outdated information, lacking a robust empirical foundation.

Principal/Agent Misalignment: The incentives of those responsible for implementing policy may not align with those of the people who create the legislation.

One of the core reasons for the disconnect between the intent and execution of government programs, I believe, lies in what I call “leaky hypotheses” that are endemic in law and policy crafting. At its core, every piece of legislation embodies a hypothesis: we don't define background checks, set welfare entitlements, or forgive student loans arbitrarily. In each case, there's a hypothesis being tested, such as "Background checks for assault weapons will reduce violent crime," or "Welfare entitlements will protect our most vulnerable citizens while not disincentivizing work."

However, the journey from legislative drafting to implementation often introduces "leaks" into these hypotheses, for several key reasons:

Lack of Clear Definition: The hypothesis behind a law is frequently not explicitly stated, leaving room for ambiguity, evasion, goalpost shifting, and varied interpretations and enforcements.

Compromised Execution and Atomicity: The legislative process is marked by negotiations and compromises that dilute the law's focus and effectiveness. As a result, the original hypothesis can become fragmented, muddied, or even inverted. This often occurs when laws are bundled and mutated with other legislative aims to aid passage, sometimes producing the dreaded omnibus bill.

Inadequate Data Collection and Experimentation: Robust frameworks for data collection and analysis are seldom attached to laws, making it difficult to measure their impact accurately or adapt based on empirical findings.

Absence of Parsimony: Legislation often becomes encumbered with extraneous elements that do not serve the core hypothesis, complicating the assessment of the law's effectiveness in achieving its intended goal.

Inconsistent Implementation: Once passed, legislation moves to implementation and enforcement, where the different jurisdictions or organizations tasked with carrying it out may implement and adhere to it differently.

The legislative process introduces intricacies and complications that lead to a significant divergence from the original aims. Watered-down provisions, state-level implementation variations, and the absence of a consistent, reliable feedback mechanism exacerbate this divergence. Lacking an experimental framework to test and validate underlying assumptions, policies become subject to a cycle of debate, uneven enactment, and contention that fails to advance understanding or effectiveness.

In this cycle, advocates claim that the altered law didn't fulfill its original intent, while opponents declare its failure. Both sides then return to square one, each aiming to force through winner-take-all legislation to enact their agenda and repeal that of their opponents. As a result, the balance of power may shift, but the argument remains static.

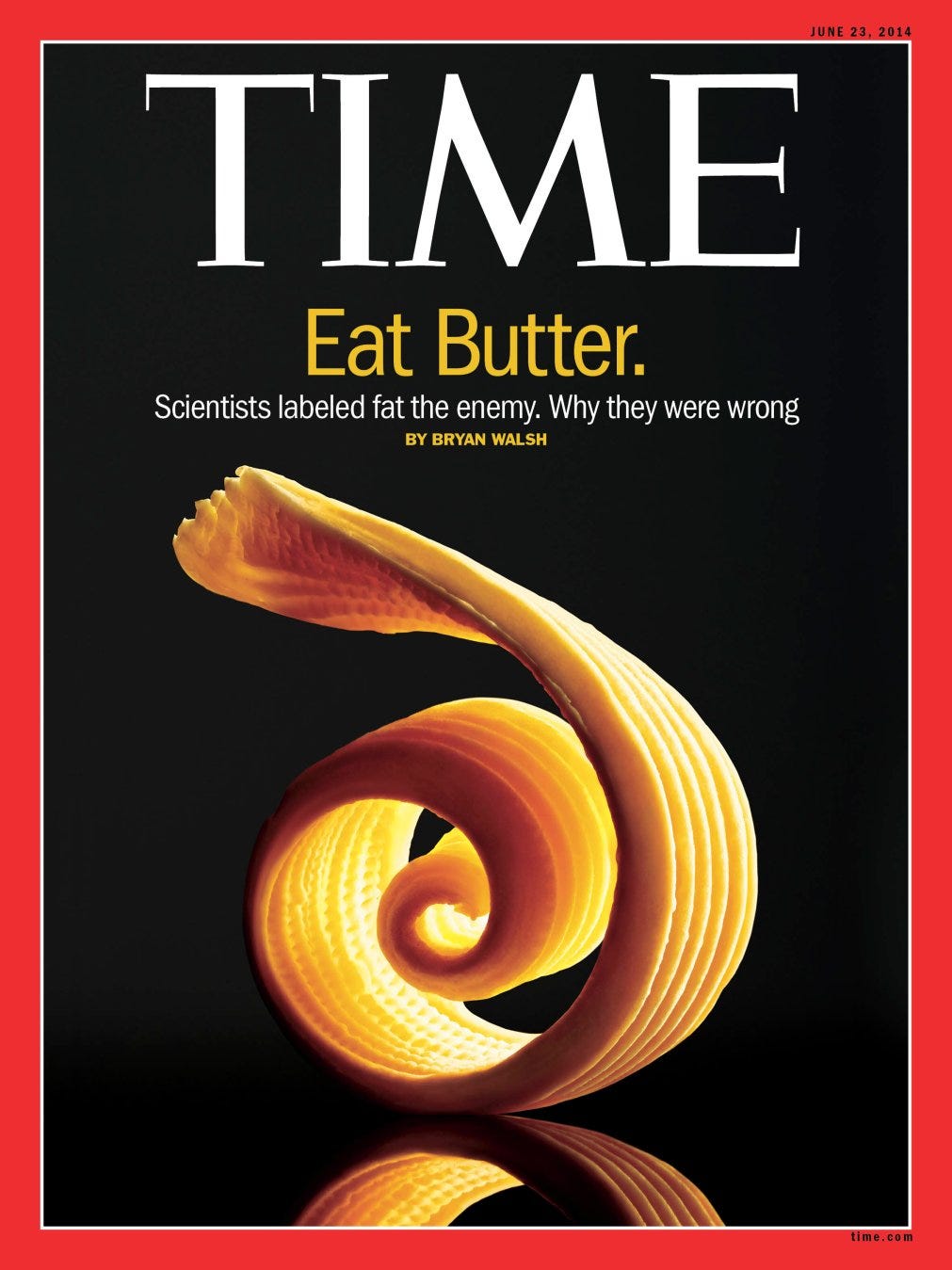

This approach all but guarantees that the original “leaky” hypothesis will be re-litigated, unchanged, in the future. The vicious and inescapable cycle continues: the same arguments are repeated, but without the benefit of evidence and experimentation to evolve the dialogue or the policy. Even when new evidence emerges, it is often treated with the credulity of a TIME magazine cover rather than with the continuous agglomeration, synthesis, and interpretation of a scientific approach.

The underlying “real” hypotheses of legislation remain in stasis, untested and unrefined. This environment makes it nearly impossible to consistently and systematically craft laws that clearly and effectively address the challenges they intend to solve and can adapt based on new data and changing conditions. Consequently, we perpetuate a system of governance that struggles to evolve and adapt to the needs and complexities of modern society.

As I’ve noted elsewhere, government stands in stark contrast to scientific and technological endeavors which, despite their flaws, are able to evolve their conversation and shared understanding due to a firm empirical bent. In these other fields, the iterative, cyclical process of hypothesis generation, testing, and refinement drives progress. The challenge and opportunity for us lie in applying this empirical rigor to the craft of governance, transforming how laws are made, applied, and evolved.

Correctness Conditions: Scientific Policy

Having delineated our problem, we can define the contours of our solution, as always with the caveat that pragmatism should temper the aspirational nature of these rules.

Clear and Testable Hypotheses: Policies should articulate a clear and testable hypothesis, setting measurable objectives that facilitate the evaluation of the policy's impact. This clarity allows policymakers and the public to understand the intended outcomes and assess whether those outcomes are being achieved. Pedant’s note: I speak of accepting a hypothesis because that’s the common usage, but formally a test can only reject, or fail to reject, the null hypothesis. Hypotheses should also keep evolving as new data and learnings emerge.

Parsimony and Atomicity in Policy Design: Policies should aim to be parsimoniously atomic, addressing target issues without needlessly muddying analysis. This approach prevents the convolutions typical of omnibus bills, ensuring that each piece of legislation has a focused, direct, and measurable impact.

Incorporation of Experimental Design: Each law and policy must include an experimental framework for systematic data collection and analysis. This empirical approach not only enables testing and validating the policy's underlying hypotheses but also creates a powerful flywheel effect. Data gathered from previous legislation can generate new hypotheses, provide unexpected insights, and help other jurisdictions accelerate their policy development based on prior art and data. This encourages the spread of good experimental design and testing methodologies across jurisdictions as well.

Path for Experimental Improvement: Beyond initial design, laws and policies should provide a clear path for iterative improvement based on empirical findings. This adaptability allows policies to evolve in response to new data, ensuring that legislation remains relevant and effective in a changing world.

Parsimony in Law and Policy: In addition to being parsimoniously atomic in design, policies should minimize the regulatory, compliance, and paperwork burdens that obstruct testing and evaluation, make the policies less accessible, and add administrative overhead. Keeping the overhead of any policy light improves accessibility and prevents the administration of the policy from becoming a confounding factor. Following Occam's razor, strive for simplicity and directness in implementation to avoid unnecessary barriers that hinder the empirical evaluation and iterative improvement of legislation. This balance requires a careful evaluation of each policy component, ensuring that every element serves a necessary function without adding undue complexity.
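To make the first condition and the pedant's note concrete, here is a minimal sketch of one common test, a two-proportion z-test, comparing the rate of some outcome in a group covered by a policy against a comparison group. The function name, the counts, and the 0.05 significance level are illustrative assumptions; the test relies on a large-sample normal approximation and only means something if the two groups are otherwise comparable (for example, because they were randomly assigned).

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(hits_a: int, n_a: int, hits_b: int, n_b: int) -> tuple[float, float]:
    """Two-sided z-test of H0: groups A and B share the same underlying outcome rate."""
    rate_a, rate_b = hits_a / n_a, hits_b / n_b
    # Under H0 both groups share one rate, so estimate it from the pooled data.
    pooled = (hits_a + hits_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("the outcome never (or always) occurs; the test is undefined")
    z = (rate_a - rate_b) / se
    # Two-sided p-value: chance of a |z| at least this large if H0 were true.
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical pilot: 100 of 1,000 covered people had the outcome vs 150 of 1,000 in the comparison group.
z, p = two_proportion_z_test(100, 1000, 150, 1000)
alpha = 0.05  # significance level fixed before the data come in
print(f"z = {z:.2f}, p = {p:.4f}, reject H0: {p < alpha}")
```

A small p-value lets us reject the null hypothesis of "no difference"; a large one means only that we failed to reject it, not that the policy has been proven to do nothing.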

Solution: Law as Hypothesis Testing

The way I propose to do this is through a framework I call law as hypothesis testing. At its core is a simple conceit: Every law can be reformulated as a testable hypothesis. For instance, "If it is illegal to purchase semi-automatic weapons in the state of California, fewer people will acquire them, and this will reduce the casualties of mass shootings." Similarly, the opposition to this could be couched as a counter-hypothesis: “If it is illegal to purchase semi-automatic weapons, criminals will be emboldened, and rates of assaults and home invasions will increase.”

Making the hypothesis clear and testable offers numerous benefits. It forces advocates and opponents to think about the outcomes critically, potentially making it easier to craft effective policy. It aligns legislators and administrators around designing the correct study rather than fixating on a policy they agree with. Instead of bickering about who is right, the conversation shifts to what's the best way to prove it. Enemies are turned into co-conspirators in the pursuit of truth.

If your objective is to reduce gun violence, you may discover that focusing on assault weapons is a misdirection: mass shootings, for all their newsworthiness, are outweighed by the more pedestrian march of everyday shootings, accidents, and suicides.

A clear hypothesis also weeds out bad actors and special interests. These groups have no place to hang their hat, since they must propose a hypothesis they believe to be supportable, forcing them either to declare their interests outright or to retreat desperately against a tide of overwhelming evidence.

Notice how this aligns the incentives of legislators. Whether I agree or disagree with the core policy proposal, I have a stake in proposing an alternative hypothesis and ensuring good experimental design (which I believe will validate my hypothesis). My objection to the policy is tempered by the fact that if the data comes back in my favor, I will be able to iterate on it or even reverse course, regardless of the power dynamics at the time. Similarly, as a supporter of the legislation, I have little urge to tamper with my proposal, since doing so weakens the evidence from my experiment.

One of the cornerstones of this approach is making data collection an integral part of any law or policy. It's crucial to accurately measure the benefits and costs of the proposed changes. While I am generally wary of imposing strict rules on data collection, allocating a proportion of the cost or benefit to this endeavor seems reasonable. For instance, if implementing a piece of legislation is projected to cost $1M and to yield $10M in benefits per year, dedicating 1% of the projected net benefit to data collection and analysis would be sensible: about $90,000 against a first-year net benefit of $9M. Even at these low stakes, this represents a significant increase in funding for data collection and analysis.
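The 1% example can be written down as a small rule. This is a sketch under stated assumptions: the implementation cost is treated as one-time, net benefit is computed over a chosen horizon (the `years` parameter is an assumption, since the text does not fix a horizon), and the function name is hypothetical.

```python
def data_collection_budget(implementation_cost: float, annual_benefit: float,
                           years: int = 1, share: float = 0.01) -> float:
    """Hypothetical rule: reserve `share` of projected net benefit for data collection and analysis."""
    if years < 1 or not 0 <= share <= 1:
        raise ValueError("years must be >= 1 and share must be between 0 and 1")
    # Treat the implementation cost as one-time, netted against benefits over the horizon.
    net_benefit = annual_benefit * years - implementation_cost
    # Clamp at zero: this proportional rule yields nothing when no net benefit is projected.
    return max(net_benefit, 0.0) * share


# The example from the text: $1M to implement, $10M/year projected benefit, 1% share.
first_year = data_collection_budget(1_000_000, 10_000_000)
five_years = data_collection_budget(1_000_000, 10_000_000, years=5)
print(f"first year: ${first_year:,.0f}; five-year horizon: ${five_years:,.0f}")
```

Tying the budget to projected net benefit keeps evaluation proportional to the stakes, which is the same scale-matching idea the conclusion returns to; a real rule would probably also want a minimum floor so small or uncertain policies still get measured.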

The potential for unlocking all these data sets is immense. Providing a wealth of high-quality data for analysis not only fosters an industry to generate and analyze this data but also has incredible knock-on effects. The current struggle to find regularly updated and timely research from government sources could be alleviated by setting up a comprehensive system that utilizes standard data collection, formatting, and tagging techniques. This approach would not only facilitate further research but also lead to the organic emergence of new law hypotheses from prior legislation. The creation of a massive, standardized, and accessible database of research data is not just an administrative improvement; it's a paradigm shift that can transform the landscape of policy-making and governance, shifting it towards a more empirical, data-driven model and providing grist to the mills of academic research and private enterprise built on top of open data.
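The text leaves the "standard data collection, formatting, and tagging" format open. As one hypothetical illustration, a Python dataclass can pin every policy trial to the same fields and serialize them to JSON so records from different jurisdictions line up. The class name, field names, identifier, and tags below are assumptions for the sake of the example, not an existing government schema.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class PolicyTrialRecord:
    """Hypothetical standardized record describing one policy experiment."""
    law_id: str            # identifier for the enacting legislation (format assumed)
    jurisdiction: str
    hypothesis: str        # plain-language statement of the expected effect
    null_hypothesis: str   # what the evaluation is trying to reject
    outcome_metric: str    # measured the same way in every jurisdiction
    population: str        # who is covered: pilot group, region, cohort
    start_date: str        # ISO 8601 date, e.g. "2025-01-01"
    end_date: str
    tags: list[str] = field(default_factory=list)  # shared vocabulary for search and reuse

    def to_json(self) -> str:
        # sort_keys gives a stable field order so records diff cleanly across publishers.
        return json.dumps(asdict(self), sort_keys=True)


record = PolicyTrialRecord(
    law_id="EXAMPLE-001",  # hypothetical identifier
    jurisdiction="Example County",
    hypothesis="Unconditional cash transfers improve employment without reducing work",
    null_hypothesis="Cash transfers have no effect on employment",
    outcome_metric="employment rate at 12 months",
    population="randomly selected subset of welfare recipients",
    start_date="2025-01-01",
    end_date="2026-12-31",
    tags=["welfare", "cash-transfer"],
)
print(record.to_json())
```

Because every record carries its hypothesis, null hypothesis, and outcome metric, a later study can search by tag, reuse the same metric, and build directly on prior results, which is the flywheel the text describes.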

So far I’ve used toy issues, but take something like whether we should create manufacturing jobs or reduce tariff and non-tariff barriers to trade. Both sides probably have something valid to say, concerns to address, and outcomes they care about. Both sides also probably believe in their cause and share some goal, even if they couch it in different terms. Even diametrically opposed parties now have an interest in setting up a common framework for collecting data on the problem, which inevitably leads to figuring out how to measure the thing in question.

With this approach, we’ve moved the battle from the nature of the problem and its solution to a battle over efficacy. There’s no longer any incentive to half-bake or compromise a solution, since that weakens your hypothesis; instead, the battle shifts to what to measure and how.

Instead of trying to reconcile opposing aims within the solution, opposing parties now share a goal: being able to accept or reject a hypothesis through effective experimentation.

You also lose the winner-take-all nature of politics, in which each party jams through all it can while it holds power. There is now a direct feedback mechanism: if you jam through something ineffective, the results will come back and reflect poorly on you. And if you are in the opposition, even when you don’t get what you want, you can take comfort that, if you are right, the evidence will turn in your favor and invalidate your opponents’ position.

It also encourages experimentation. While some purists may frown at rules that don't apply equally to everyone, it is much easier to propose an approach that is limited either in the population it affects or in the time it is in effect. It is better to propose legislation that clearly defines a testable hypothesis than to have it apply universally out of the gate. For instance, a hypothesis about replacing existing welfare programs with unconditional cash transfers could be tested on a subset of welfare recipients rather than through a universal solution that replaces only a fraction of benefits.
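Testing on a subset only yields clean evidence if the subset is chosen fairly, and random assignment is the standard way to do that. The sketch below splits a hypothetical list of recipient IDs into a pilot group (for example, those moved to unconditional cash transfers) and a comparison group that keeps existing benefits. The function name, the IDs, the pilot fraction, and the fixed seed are assumptions for illustration.

```python
import random


def assign_pilot(recipient_ids: list[str], pilot_fraction: float,
                 seed: int) -> tuple[set[str], set[str]]:
    """Randomly split recipients into a pilot group and a comparison group."""
    if not 0 < pilot_fraction < 1:
        raise ValueError("pilot_fraction must be strictly between 0 and 1")
    # Sort first so the result depends only on the set of IDs, not their input order.
    shuffled = sorted(recipient_ids)
    # A fixed, published seed makes the draw reproducible and auditable.
    rng = random.Random(seed)
    rng.shuffle(shuffled)
    cutoff = round(len(shuffled) * pilot_fraction)
    return set(shuffled[:cutoff]), set(shuffled[cutoff:])


# Hypothetical roster of 1,000 recipients; 10% join the pilot.
ids = [f"recipient-{i:04d}" for i in range(1000)]
pilot, comparison = assign_pilot(ids, pilot_fraction=0.1, seed=2024)
print(len(pilot), len(comparison))
```

Publishing the seed and the roster in advance would let anyone re-run the draw and confirm that no one hand-picked the pilot group, which speaks to the transparency concerns raised earlier.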

This approach might not work with a party as cynical as the NRA, but take even something like abstinence-only education. Pro-abstinence parties are going to make little progress explaining how many unbaptized babies you can fit upon a pinhead. Anti-abstinence parties are going to make little headway talking of personal empowerment. But maybe, just maybe, they can come to a shared framework of something like high school graduation rates and children born out of wedlock by age cohort that allows both sides to test a hypothesis they believe supports further legislation.

Even if it all fails, we’ve collected data and can iterate again within the framework. We won’t move 100% of the folks on an issue, but if we can move 20%, the conversation shifts, and the side on the losing end of the evidence has to explain the discrepancy in the data, find a new justification for its belief, or abandon its position. This approach wins not because people see a single data point that contradicts their priors and change their minds, but because evidence accumulates through a collaborative process in which they are active participants.

Another benefit is that we’ve removed the stigma of failure. If my hypothesis is rejected, I’ve gained knowledge, not lost my reputation.

Adaptive Policy Trials

Another way to envision the end goal of this approach is by drawing a parallel with the evolution in medicine towards adaptive clinical trials.

An adaptive design is defined as a design that allows modifications to the trial and/or statistical procedures of the trial after its initiation without undermining its validity and integrity.[8] The purpose is to make clinical trials more flexible, efficient and fast. Due to the level of flexibility involved, these trial designs are also termed as “flexible designs.”1

Adaptive clinical trials have significantly advanced medicine by embracing flexibility, permitting real-time adjustments based on interim results without undermining the study's integrity. This adaptability allows promising interventions to be refined, more widely implemented, and approved more swiftly, ultimately benefiting patients and enhancing outcomes. In a parallel manner, adopting an adaptive framework in policy-making can lead to more responsive, effective, and beneficial policy changes. It ensures that legislation can swiftly adapt to new findings and evolving societal needs, thereby broadening the reach and impact of well-informed policy decisions.

Central to the success of an adaptive policy-making framework is the robust collection and analysis of data. Just as the precision and reliability of data are paramount in adaptive clinical trials, ensuring accurate and timely adjustments, a similar commitment to rigorous data practices is essential in the realm of policy-making. Comprehensive data collection and sophisticated analysis not only provide the empirical basis for initial policy formulation but also enable continuous monitoring and iterative refinement. This process ensures that policies are not static but are living entities that evolve in response to new insights and changing circumstances. By establishing a robust data infrastructure, we empower policymakers to make informed decisions, validate the effectiveness of interventions, and adapt strategies proactively, thereby enhancing the overall impact and responsiveness of governance.

We now have a consensus-driven hypothesis to test. We have a policy in place that we believe will influence the outcome of this hypothesis. A robust data collection system is set up to systematically measure and analyze the results. And, as we acquire and analyze data, we are ready to flexibly adapt our design.

Expanding Policy Reach: If we reject the null hypothesis and the measured effect runs in the intended direction, indicating that our policy has a positive impact, we can consider widening the scope, reach, or extent of the policy to magnify the benefit.

Revising and Iterating: Conversely, if we fail to reject the null hypothesis, suggesting that our policy may not be achieving its goals, we are not bound to an endless cycle of policy ping pong. Instead, we can revise our original hypothesis, propose a new, more focused trial, or perhaps decide to put an inconclusive or ineffective policy to rest.
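The two branches above amount to a decision rule applied at pre-planned interim looks. This sketch assumes each look yields a two-sided p-value and a signed effect estimate (positive meaning the policy moved the outcome in the intended direction), and it splits the significance level evenly across looks. That even split (a Bonferroni correction) is a deliberately simple, conservative stand-in for the Pocock or O'Brien-Fleming boundaries used in many group-sequential clinical trials, not the method those trials use. All names are hypothetical.

```python
from enum import Enum


class Decision(Enum):
    EXPAND = "expand"      # significant effect in the intended direction
    REVISE = "revise"      # significant effect in the wrong direction, or none by the final look
    CONTINUE = "continue"  # not yet conclusive; keep collecting data


def interim_decision(p_value: float, effect: float, look: int, total_looks: int,
                     alpha: float = 0.05) -> Decision:
    """Decide at a pre-registered interim look, splitting alpha evenly across all looks."""
    if not 1 <= look <= total_looks:
        raise ValueError("look must be between 1 and total_looks")
    # Bonferroni split: by the union bound, the chance of any false positive
    # across all looks stays at or below alpha.
    threshold = alpha / total_looks
    if p_value < threshold:
        return Decision.EXPAND if effect > 0 else Decision.REVISE
    if look == total_looks:
        return Decision.REVISE  # failed to reject the null hypothesis by the final look
    return Decision.CONTINUE


# Hypothetical trial with four planned looks: p = 0.02 at look 2 is not yet enough.
print(interim_decision(p_value=0.02, effect=0.3, look=2, total_looks=4))
```

Fixing the number of looks and the thresholds before the trial starts is what lets the policy adapt mid-course without the repeated peeking inflating the false-positive rate.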

Imagining the culmination of this approach, I foresee a system where prior evidence directly influences the passage and repeal of laws. If a hypothesis demonstrates promise, the affected population or the scale of response could be automatically adjusted, fostering a more responsive and dynamic legislative environment. Alternatively, the criteria for passing or renewing legislation could evolve to be more evidence-based; for instance, legislation supported by robust experimental evidence might require a lower threshold, say 41%, rather than the conventional 51%, to pass.

Two-Way Door Legislation

When considering the practical application of our adaptive policy-making approach, it's essential to assess the reversibility of decisions. Borrowing a concept from Amazon, we can categorize decisions as "two-way door" or "one-way door." Two-way door decisions are those that are modular and easily reversible. For example, changing the organ donation process to an opt-out system involves minimal costs and processes, making it a reversible, two-way door decision. On the other hand, decisions like altering the US power mix to include more nuclear energy represent one-way door decisions due to the significant fixed costs and long timescales involved, making them difficult to reverse.

In situations where we face a one-way door decision, the best strategy is to narrow our approach so the hypothesis becomes more testable and iterative. Can we examine a more limited hypothesis? Is it possible to test our hypothesis through random sampling without applying it universally? While we can never entirely eliminate one-way door decisions, we can make them less frequent and ensure that when they arise, they are backed by substantial evidence. This keeps every policy, regardless of its inherent reversibility, subject to scrutiny, experimentation, and evidence-based refinement.

Conclusion: From Hypothesis to Testing

How do we bring the Law as Hypothesis Testing from a theory into practice?

The litmus test for a framework's value lies in real-world application, echoing, as always, George E. P. Box’s “all models are wrong, but some are useful.” We need the amateur scientists and innovators in policy-making to adopt this approach, experiment rigorously, and observe outcomes critically.

Benefits of this approach:

Scale Invariance: This approach is adaptable, fitting policies of any scale, from local to national. It aligns data collection and analysis with the policy's scope, budget and anticipated impact, ensuring that even modest initiatives are grounded in empirical evidence.

Compatibility with Existing Legislative Processes: This framework doesn't necessitate an overhaul of the legislative process. It encourages policymakers to frame hypotheses, design experiments, and embed scientific rigor in their work and thinking, and it leaves room for drafting traditional legislation that incorporates these elements. This compatibility ensures that even amid political compromises, the core of a testable hypothesis can endure.

But the elephant in the room remains: What’s the incentive for existing actors, politicians and bureaucrats to depart from the status quo and embrace this novel approach?

Societal Improvement: Perhaps some naive optimism is in order. In an era when trust in government's competence is eroding, some politicians may genuinely seek a framework that improves legislative outcomes while making government more flexible and responsive.

Double Down on Success or Pivot on Failure: This model allows policymakers to responsively amplify successful initiatives or pivot from failing initiatives in a rapid, data-driven, adaptive manner.

Political Cover and Escape from Gridlock: By centering politics on empirical measurement, the framework offers politicians an escape from gridlock and rigid party lines. They receive cover for unpopular decisions by letting the evidence tie their hands, and they can shelter from criticism under the aegis of eventual vindication by the evidence.

Indulge Pet Causes: Politicians passionate about specific issues can build measures of those issues into the experimental framework, ensuring their concerns are measured and addressed without the excesses of traditional pork-barrel politics. It is cheaper to measure a pet cause than to implement and administer it as law.

Let’s advance open societies by bringing the scientific method and empirical testing to bear on the law. Let’s refine this framework together and engage decision-makers and legislators to urge them towards a future where governance evolves through data, evidence, and continuous innovation.
